Hi there! I'm a second-year PhD candidate (Aug. 2024 - ) at the School of Computing, NUS, advised by Prof. Lin Shao. I am supported by the President's Graduate Fellowship (PGF).

I obtained my B.Eng. (Sep. 2020 - Jun. 2024) in Robotics 🤖 from Zhejiang University, where I had the privilege of working with Kechun Xu, Prof. Rong Xiong, and Prof. Yue Wang.

My research focuses on dexterous robotic manipulation with scalable learning, cross-embodiment generalization, and tactile sensing. 🦾

News

- Jan 2026 \(\mathcal{T(R,O)}\) Grasp accepted to ICRA 2026.

- Dec 2025 Research Achievement Award (2025 - 2026), NUS School of Computing.

- Dec 2025 Passed the PhD qualification exam, officially a PhD candidate!

- Nov 2025 DexSinGrasp accepted to RA-L.

- Jun 2025 MetaFold accepted to IROS 2025 as Oral Presentation!

- May 2025 \(\mathcal{D(R,O)}\) Grasp received the Best Paper Award on Robot Manipulation and Locomotion @ ICRA 2025. TelePreview received the Best Paper Award @ ICRA 2025 Workshop on Human-Centric Multilateral Teleoperation.

- Apr 2025 \(\mathcal{D(R,O)}\) Grasp selected as a finalist for the Best Paper Award and the Best Paper Award on Robot Manipulation and Locomotion @ ICRA 2025.

- Jan 2025 \(\mathcal{D(R,O)}\) Grasp accepted to ICRA 2025.

- Jan 2025 FLIP accepted to ICLR 2025.

- Nov 2024 \(\mathcal{D(R,O)}\) Grasp received the Best Robotics Paper Award @ CoRL 2024 Workshop MAPoDeL!

- Aug 2024 I am honored to be funded by the President's Graduate Fellowship (PGF).

- Jun 2024 ManiFM accepted to IROS 2024 as Oral Presentation!

- Aug 2023 Diff-LfD accepted to CoRL 2023 as Oral Presentation!

Research Highlights

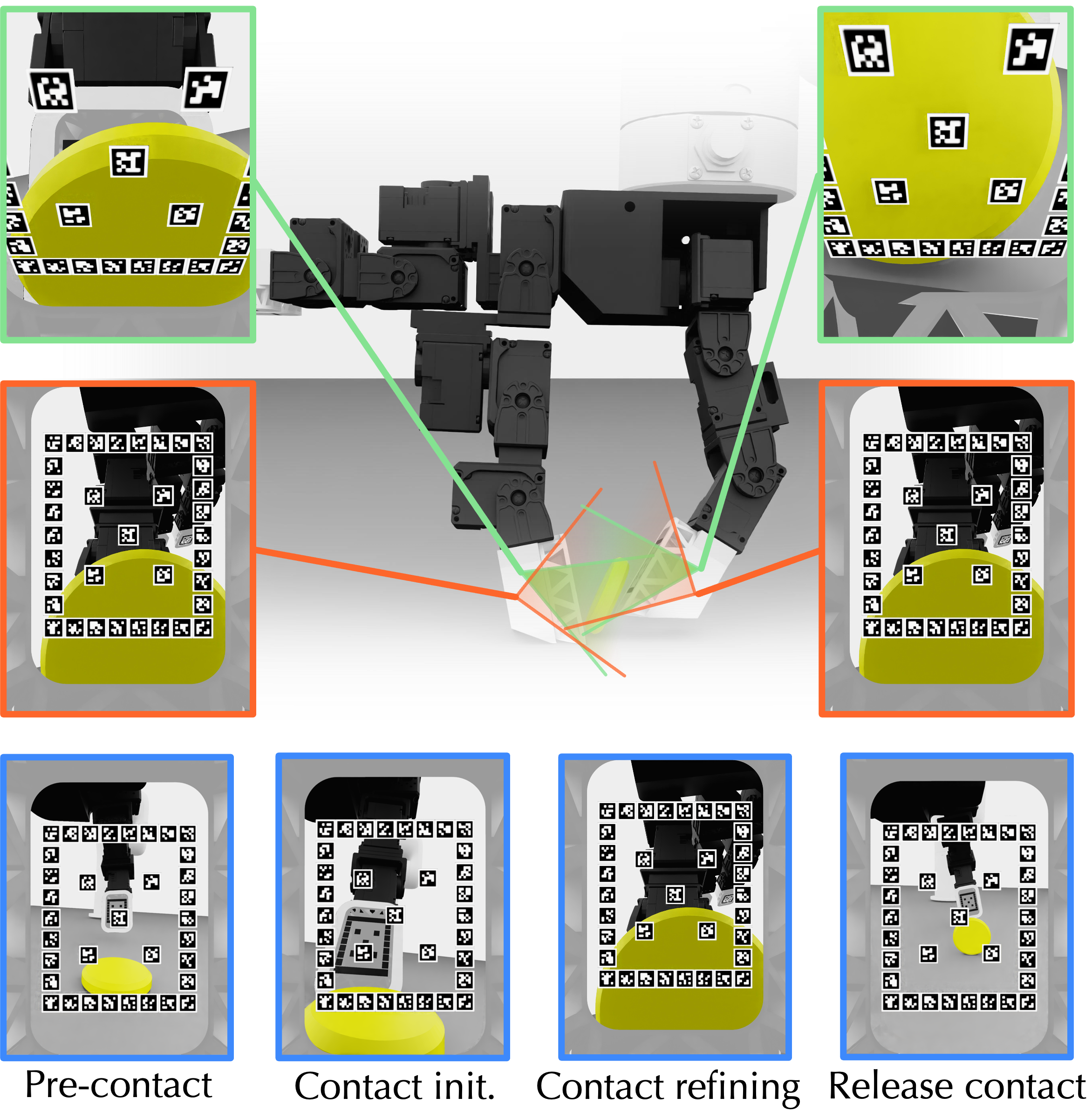

FingerEye: Learning Dexterous Manipulation with Continuous Vision-Tactile Sensing

In Submission

FingerEye bridges seeing and touching: fingertip binocular RGB guides contact alignment, compliant-ring deformation provides a contact-wrench proxy, and grouped fusion preserves local contact cues.

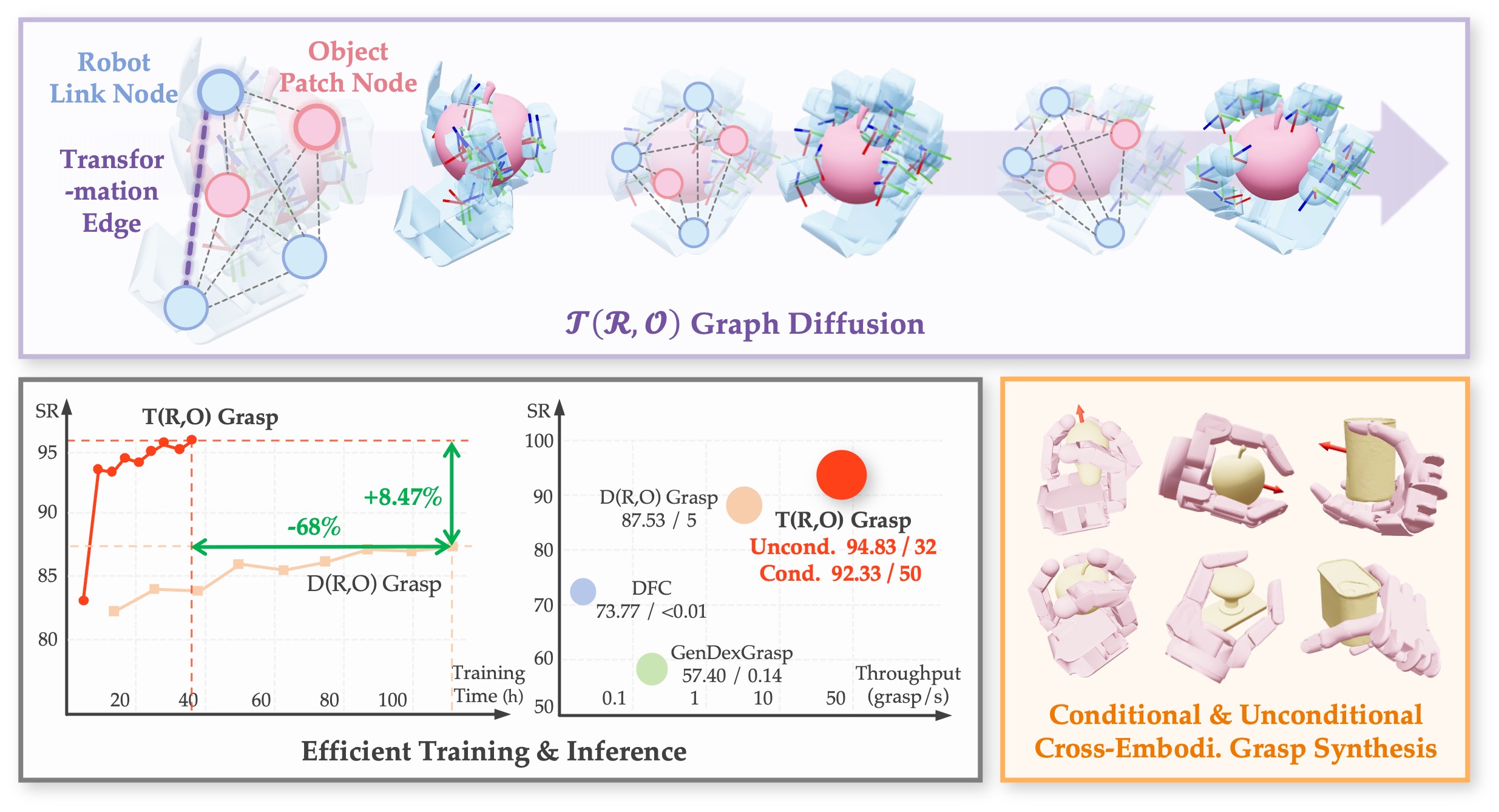

\(\mathcal{T(R,O)}\) Grasp: Efficient Graph Diffusion of Robot-Object Spatial Transformation for Cross-Embodiment Dexterous Grasping

International Conference on Robotics and Automation (ICRA) 2026

\(\mathcal{T(R,O)}\) Grasp is a diffusion-based framework with a unified hand-object graph representation for fast, accurate, and embodiment-general dexterous grasp synthesis, achieving 94.83% success at 0.21 s inference.

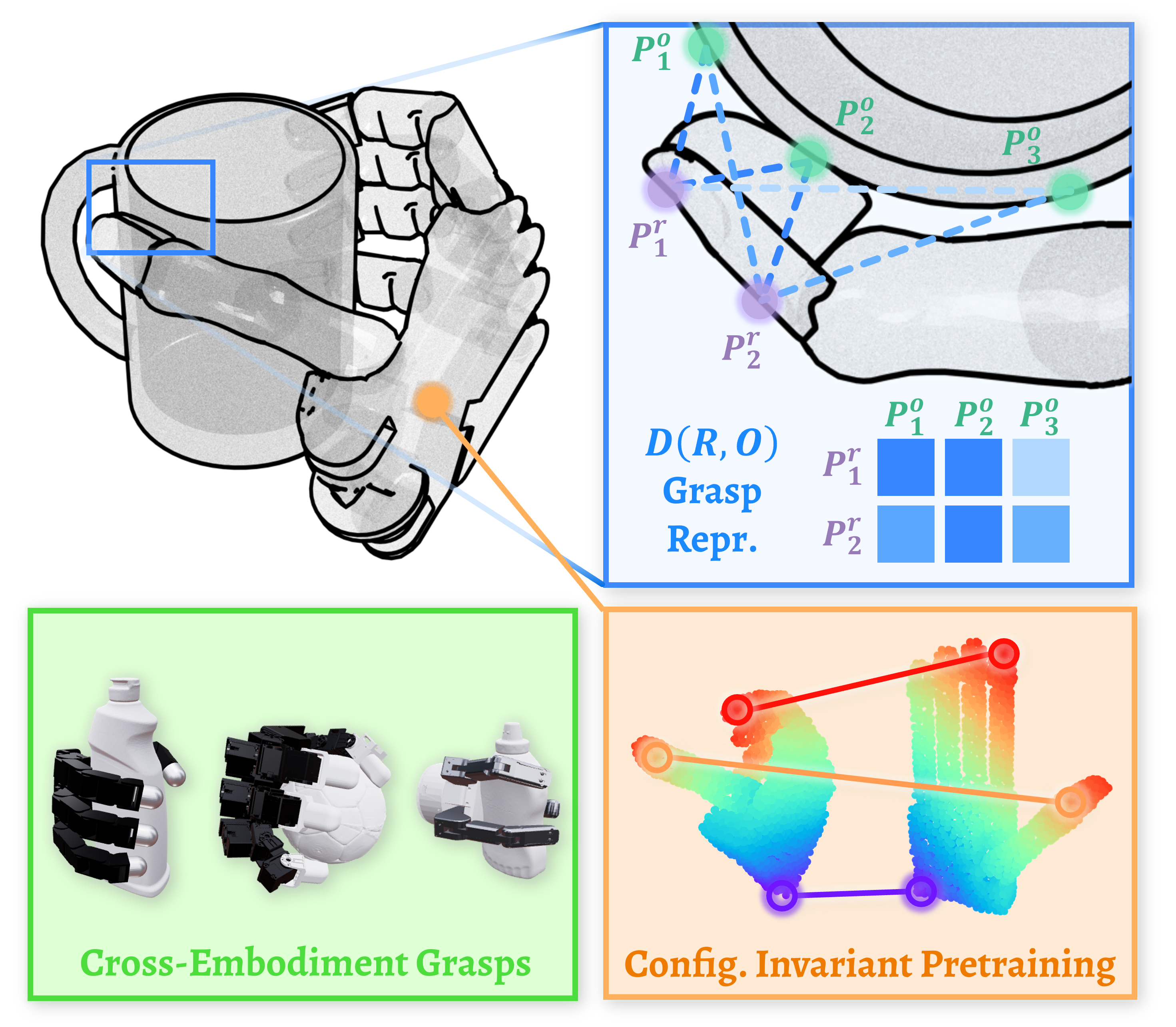

\(\mathcal{D(R,O)}\) Grasp: A Unified Representation of Robot and Object Interaction for Cross-Embodiment Dexterous Grasping

International Conference on Robotics and Automation (ICRA) 2025

Best Paper Award on Robot Manipulation and Locomotion @ ICRA 2025

Best Paper Award Finalist @ ICRA 2025

Best Robotics Paper Award @ CoRL 2024 Workshop MAPoDeL

Introduce a novel representation, \(\mathcal{D(R,O)}\), for dexterous grasping tasks. This interaction-centric formulation transcends conventional robot-centric and object-centric paradigms, facilitating generalization across diverse robotic hands and objects.

ManiFoundation Model for General-Purpose Robotic Manipulation of Contact Synthesis with Arbitrary Objects and Robots

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2024

Oral Presentation

Introduce a framework that takes contact synthesis as a unified task representation and generalizes over objects, robots, and manipulation tasks.

Collaborative Projects

Contact Coverage-Guided Exploration for General-Purpose Dexterous Manipulation

In Submission

A general RL exploration reward term for dexterous manipulation using contact coverage guidance. Encourages discovering diverse contact patterns and demonstrates improved training efficiency.

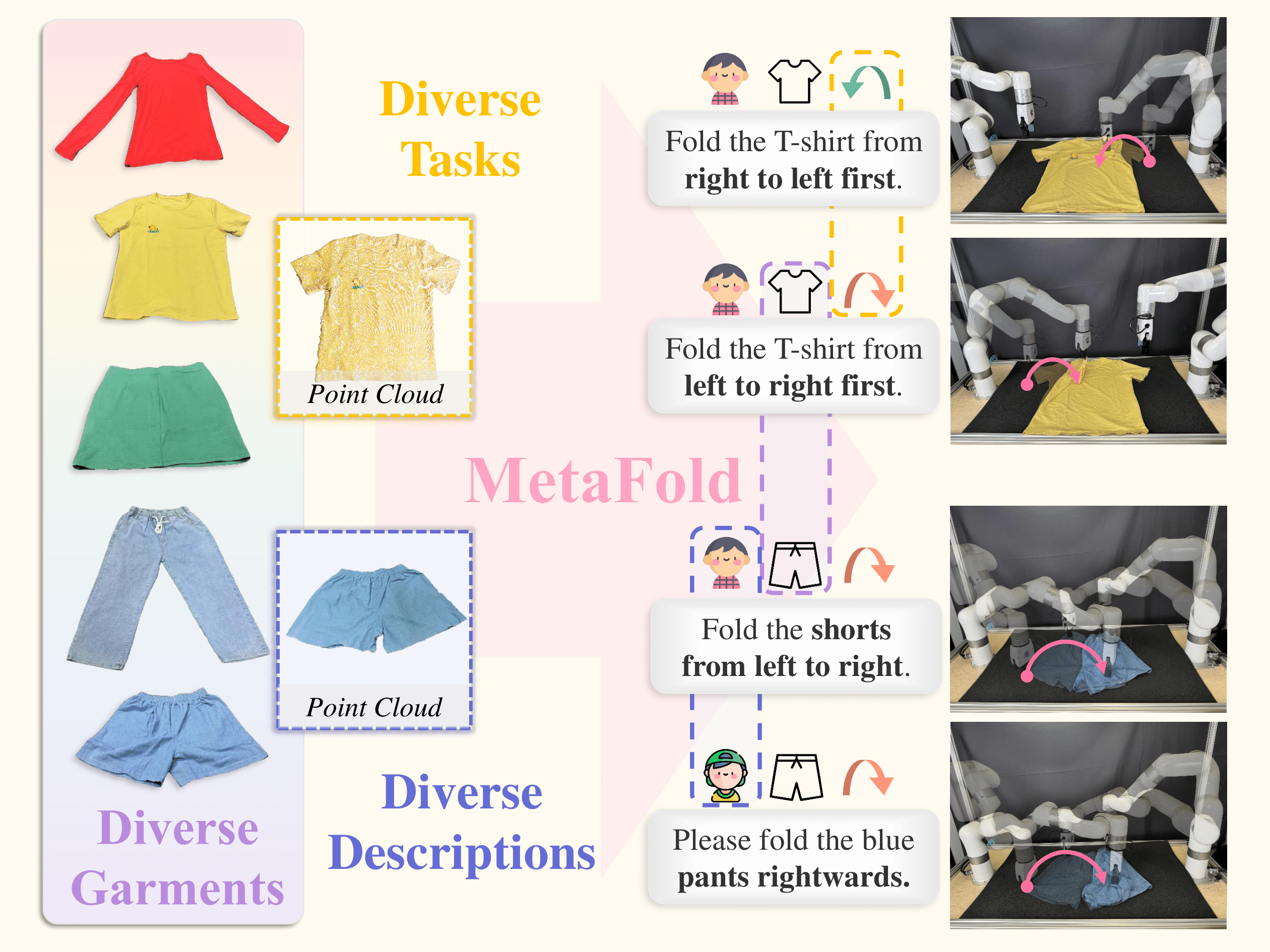

MetaFold: Language-Guided Multi-Category Garment Folding Framework via Trajectory Generation and Foundation Model

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2025

Oral Presentation

Disentangle folding tasks into language-guided point cloud trajectory generation and low-level action prediction.

TelePreview: A User-Friendly Teleoperation System with Virtual Arm Assistance for Enhanced Effectiveness

Best Paper Award @ ICRA 2025 Workshop on Human-Centric Teleoperation

Spotlight Presentation @ ICRA 2025 Workshop on Human-Centric Teleoperation

Implement a low-cost teleoperation system using data gloves and IMU sensors, paired with an assistant module that improves the data collection process by showing visual previews of future robot operations.

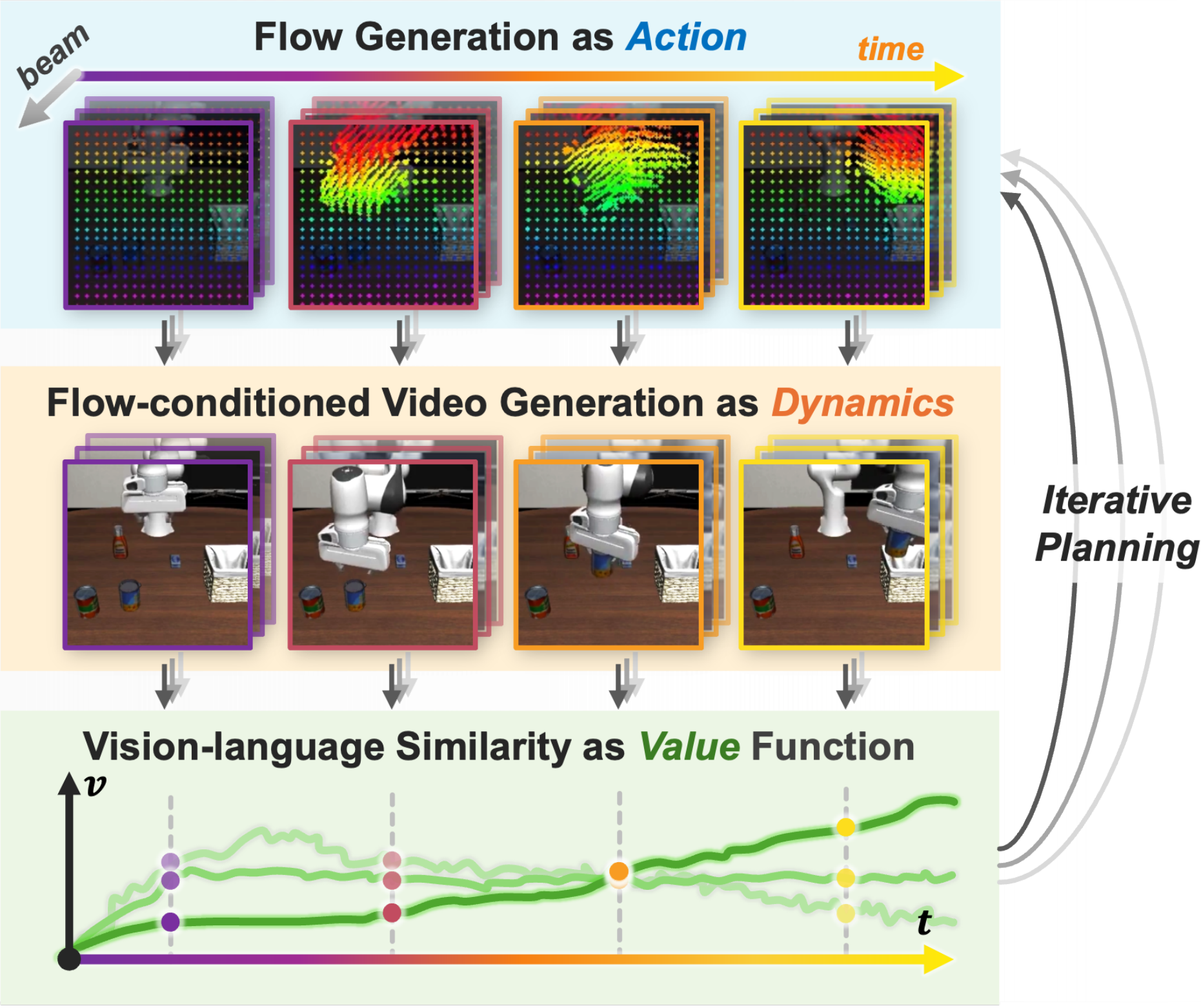

FLIP: Flow-Centric Generative Planning for General-Purpose Manipulation Tasks

International Conference on Learning Representations (ICLR) 2025

Oral Presentation @ CoRL 2024 Workshop LEAP

Propose flow-centric generative planning (FLIP) as an interactive world model for general-purpose, model-based planning in manipulation tasks.

Diff-LfD: Contact-aware Model-based Learning from Visual Demonstration for Robotic Manipulation via Differentiable Physics-based Simulation and Rendering

Conference on Robot Learning (CoRL) 2023

Oral Presentation

Propose a pipeline to learn dexterous manipulation from human video demonstrations. It includes self-supervised pose and shape estimation via differentiable rendering and contact sequence generation via differentiable simulation.

Alumni

Jingxiang Guo (intern 2024), PhD student, National University of Singapore

Zhenyu Wei (intern 2024), PhD student, University of North Carolina at Chapel Hill

Services

Conference Reviewer

- IEEE International Conference on Robotics and Automation (ICRA)

- IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)

Journal Reviewer

- IEEE Transactions on Robotics (T-RO)

- IEEE Robotics and Automation Letters (RA-L)

- IEEE/ASME Transactions on Mechatronics (TMECH)

Teaching

- Teaching Assistant, CS4278/CS5278 Intelligent Robots: Algorithms and Systems, NUS, Fall 2025. Course Project, PyBullet Tutorial.